Installing Oracle 10g RAC on RedHat EL5: CRS Installation

System environment:

Operating system: RedHat EL5

Cluster: Oracle CRS 10.2.0.1.0

Oracle: Oracle 10.2.0.1.0

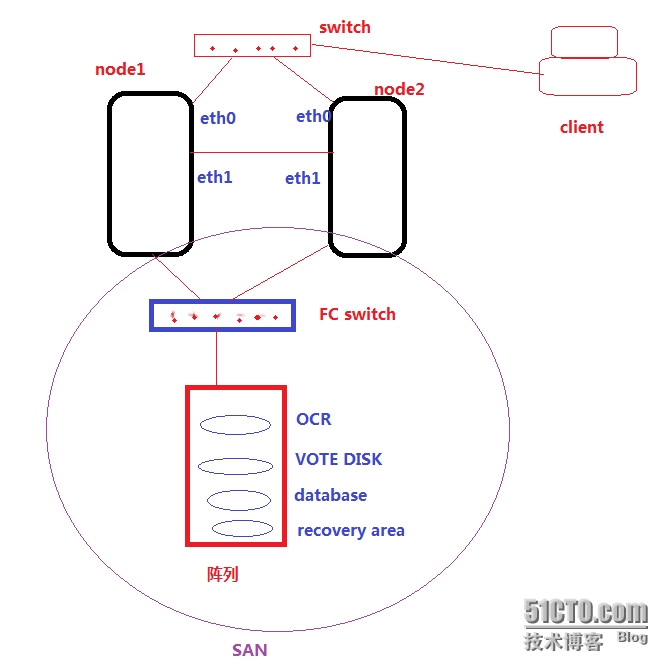

The figure above shows the RAC system architecture.

2. CRS Installation

Cluster Ready Services (CRS) is the Oracle software that manages cluster resources in a RAC. It must be installed first when building a RAC.

The installation is done with the graphical installer, as the oracle user (on node1):

Note: first edit the installer configuration file (oraparam.ini) to add redhat-5 to the certified versions:

[oracle@node1 install]$ pwd

/home/oracle/cluster/install

[oracle@node1 install]$ ls

addLangs.sh images oneclick.properties oraparamsilent.ini response

addNode.sh lsnodes oraparam.ini resource unzip

[oracle@node1 install]$ vi oraparam.ini

[Certified Versions]

Linux=redhat-3,SuSE-9,redhat-4,redhat-5,UnitedLinux-1.0,asianux-1,asianux-2
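The same edit can be scripted instead of done in vi. A minimal sketch, assuming the file contains a single `Linux=` line as shown above; the function name `add_redhat5` is hypothetical and not part of the Oracle installer. It inserts `redhat-5` right after `redhat-4` (where the author put it), falls back to appending it to the end of the list, keeps a `.bak` copy, and does nothing if `redhat-5` is already listed. It relies on GNU `sed -i`, which RHEL ships.

```shell
# Hypothetical helper: add "redhat-5" to the Linux= line of oraparam.ini.
# Assumes one "Linux=" line (under [Certified Versions]); needs GNU sed -i.
add_redhat5() {
    local ini="$1"
    # Nothing to do if redhat-5 is already certified.
    if grep -q '^Linux=.*redhat-5' "$ini"; then
        return 0
    fi
    cp -p "$ini" "$ini.bak"    # keep the original file
    if grep -q '^Linux=.*redhat-4' "$ini"; then
        # Insert right after redhat-4, as done by hand above.
        sed -i 's/^\(Linux=.*redhat-4\)/\1,redhat-5/' "$ini"
    else
        # Otherwise append to the end of the list.
        sed -i '/^Linux=/ s/$/,redhat-5/' "$ini"
    fi
}

# Usage (path as in this article):
# add_redhat5 /home/oracle/cluster/install/oraparam.ini
```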

[oracle@node1 cluster]$ ./runInstaller

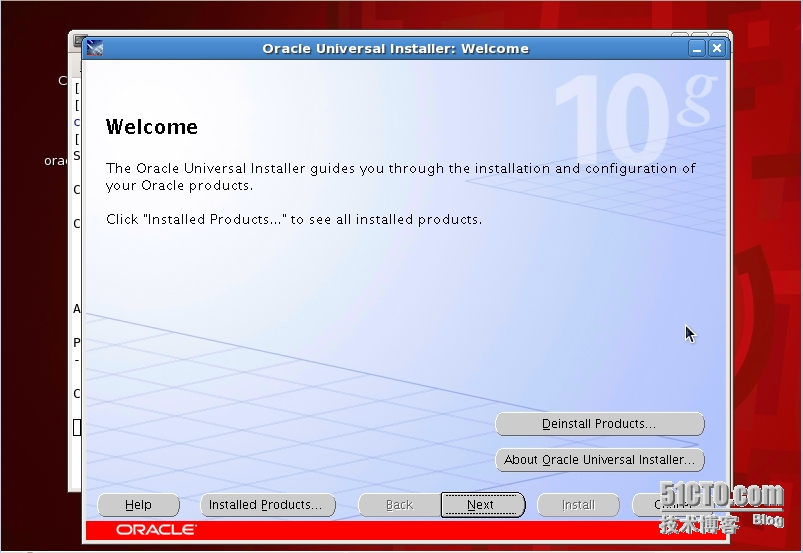

Welcome screen

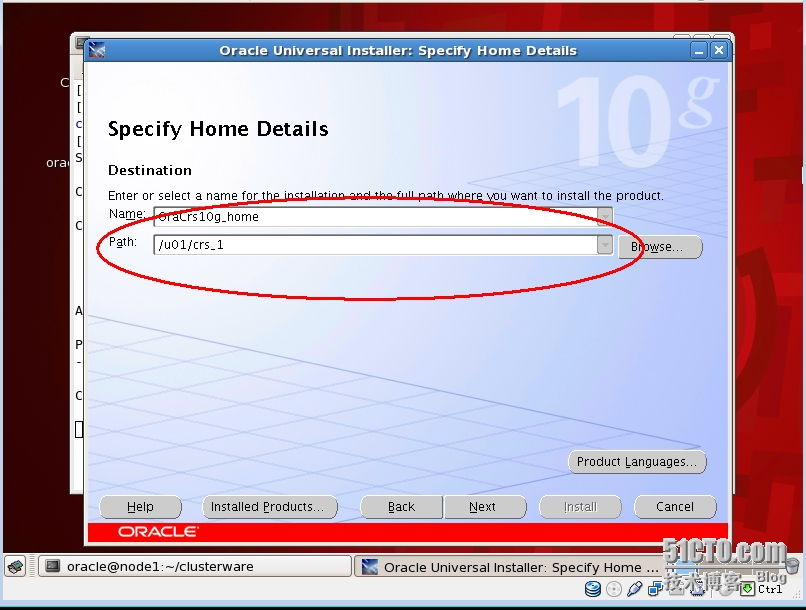

Note: the CRS home must not be the same directory as the Oracle software home; it must be a separate directory (here /u01/crs_1):

[oracle@node1 ~]$ ls -l /u01

total 24

drwxr-xr-x 3 oracle oinstall 4096 May 5 17:04 app

drwxr-xr-x 36 oracle oinstall 4096 May 7 11:08 crs_1

drwx------ 2 oracle oinstall 16384 May 4 15:59 lost+found

[oracle@node1 ~]$
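A quick pre-install sanity check for this rule, written as a hypothetical shell function (`check_crs_home` is not an Oracle tool). It compares the two paths after stripping trailing slashes; it does not resolve symlinks, so treat it as a sketch. The Oracle home path in the usage line is an assumed example, since the article does not show it.

```shell
# Hypothetical pre-install check: the CRS home must be a different
# directory from the Oracle database home. Trailing slashes are ignored;
# symlinks are not resolved.
check_crs_home() {
    local crs_home="$1" ora_home="$2"
    # Strip trailing slashes so /u01/crs_1/ and /u01/crs_1 compare equal.
    while [ "${crs_home%/}" != "$crs_home" ]; do crs_home="${crs_home%/}"; done
    while [ "${ora_home%/}" != "$ora_home" ]; do ora_home="${ora_home%/}"; done
    if [ "$crs_home" = "$ora_home" ]; then
        echo "ERROR: CRS home $crs_home is the same as the Oracle home" >&2
        return 1
    fi
    echo "OK: CRS home $crs_home is separate from Oracle home $ora_home"
}

# Usage (the Oracle home below is a hypothetical example):
# check_crs_home /u01/crs_1 /u01/app/oracle/product/10.2.0/db_1
```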

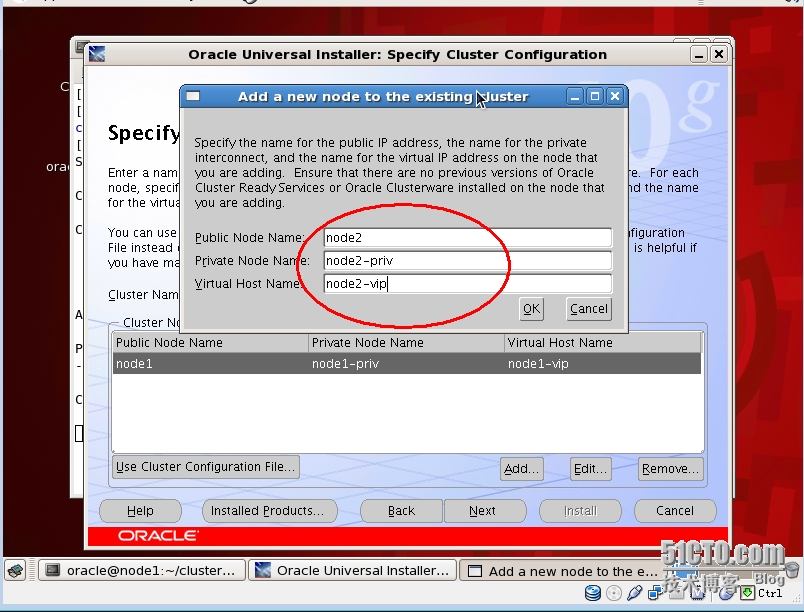

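Add the cluster nodes (if the trust relationship between the hosts, i.e. SSH user equivalence, is misconfigured, node2 cannot be discovered here).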

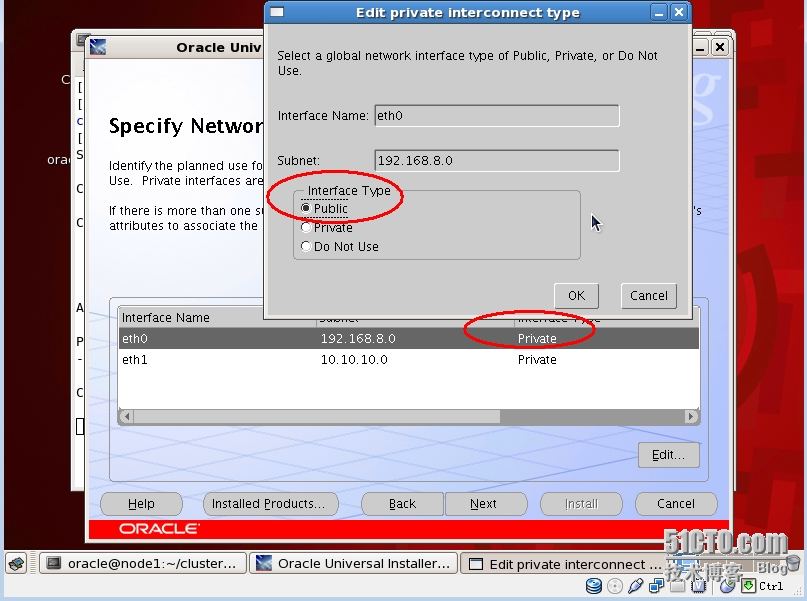

Edit the properties of the public NIC and set its interface type to Public (the public NIC is used for communication with clients).

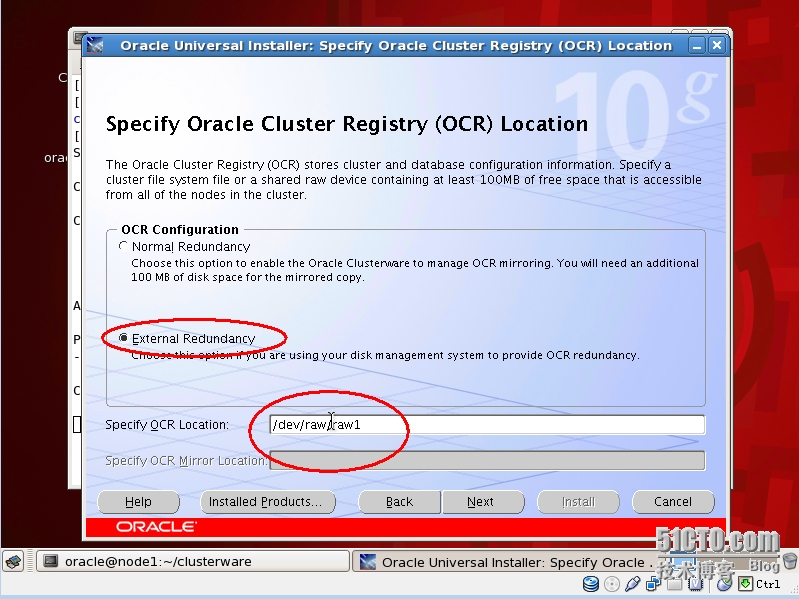

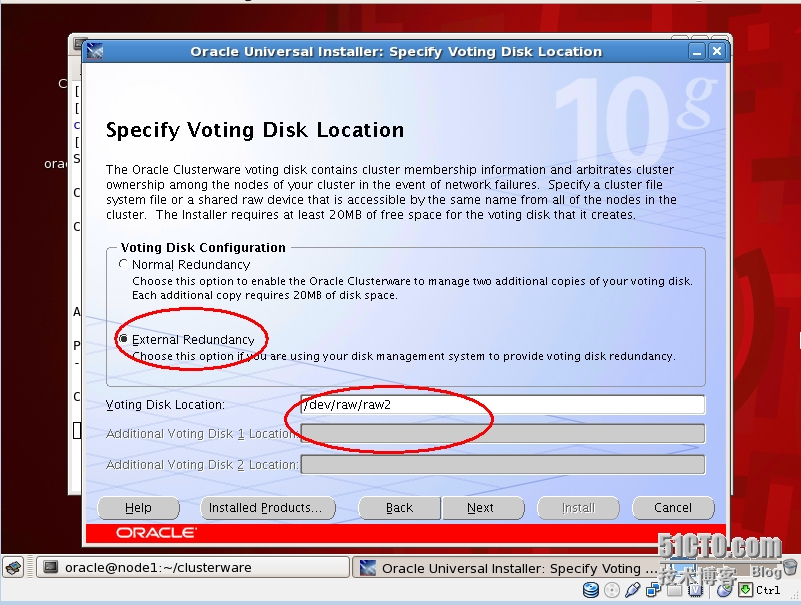

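In this setup the OCR is placed on a raw device (with External Redundancy only one raw device is needed; a mirror can be added after installation).

The voting disk is also placed on a raw device (with External Redundancy only one raw device is needed; more raw devices can be added after installation for redundancy).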
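Before entering the OCR and voting disk locations in the installer, it can help to confirm that the paths really are raw devices, which Linux exposes as character devices. A minimal sketch with a hypothetical function `check_raw_devices`; `/dev/raw/raw2` is the voting device from the root.sh output later in this article, while `/dev/raw/raw1` for the OCR is an assumption. The `-c` test only checks the device type; it does not show which disk each raw device is bound to (`raw -qa` lists the bindings).

```shell
# Hypothetical pre-check: each path given should be a raw device, which
# Linux exposes as a character device. Does not check the raw binding.
check_raw_devices() {
    local dev rc=0
    for dev in "$@"; do
        if [ -c "$dev" ]; then
            echo "OK: $dev is a character device"
        else
            echo "NOT A CHARACTER DEVICE: $dev" >&2
            rc=1
        fi
    done
    return $rc
}

# Usage (raw1 for the OCR is an assumed name; raw2 is the voting disk):
# check_raw_devices /dev/raw/raw1 /dev/raw/raw2
```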

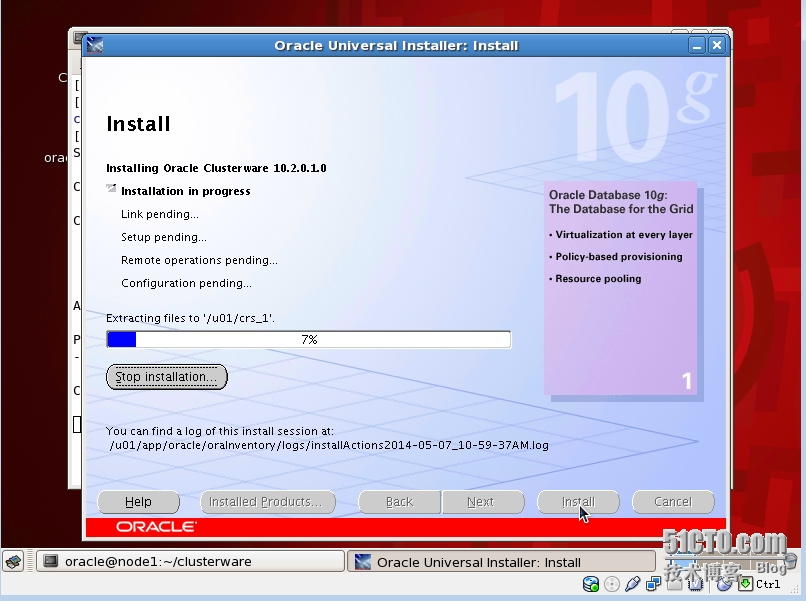

Start the installation (the software is also copied to node2).

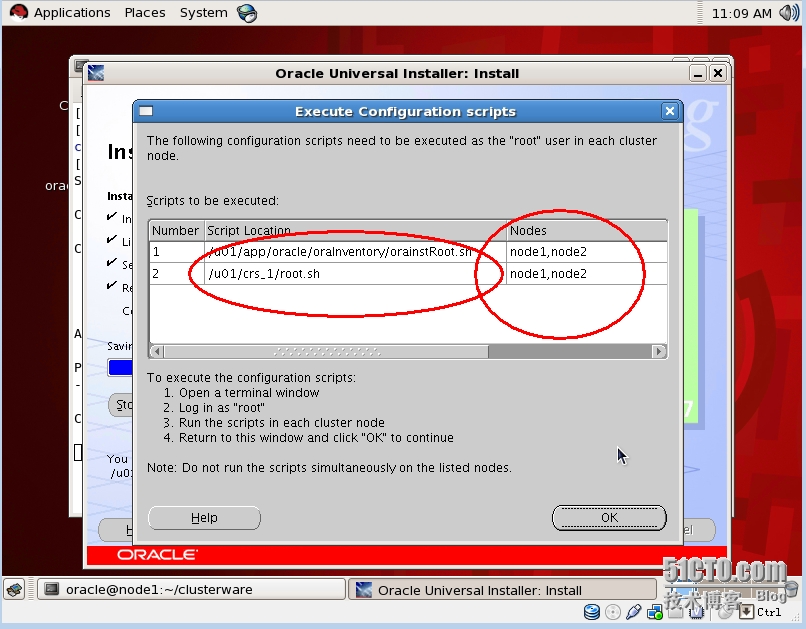

The installer then prompts you to run the scripts as root on both nodes, in this order:

node1:

[root@node1 ~]# /u01/app/oracle/oraInventory/orainstRoot.sh

Changing permissions of /u01/app/oracle/oraInventory to 770.

Changing groupname of /u01/app/oracle/oraInventory to oinstall.

The execution of the script is complete

node2:

[root@node2 ~]# /u01/app/oracle/oraInventory/orainstRoot.sh

Changing permissions of /u01/app/oracle/oraInventory to 770.

Changing groupname of /u01/app/oracle/oraInventory to oinstall.

The execution of the script is complete

node1:

[root@node1 ~]# /u01/crs_1/root.sh

WARNING: directory '/u01' is not owned by root

Checking to see if Oracle CRS stack is already configured

/etc/oracle does not exist. Creating it now.

Setting the permissions on OCR backup directory

Setting up NS directories

Oracle Cluster Registry configuration upgraded successfully

WARNING: directory '/u01' is not owned by root

assigning default hostname node1 for node 1.

assigning default hostname node2 for node 2.

Successfully accumulated necessary OCR keys.

Using ports: CSS=49895 CRS=49896 EVMC=49898 and EVMR=49897.

node <nodenumber>: <nodename> <private interconnect name> <hostname>

node 1: node1 node1-priv node1

node 2: node2 node2-priv node2

Creating OCR keys for user 'root', privgrp 'root'..

Operation successful.

Now formatting voting device: /dev/raw/raw2

Format of 1 voting devices complete.

Startup will be queued to init within 90 seconds.

Adding daemons to inittab

Expecting the CRS daemons to be up within 600 seconds.

CSS is active on these nodes.

node1

CSS is inactive on these nodes.

node2

Local node checking complete.

Run root.sh on remaining nodes to start CRS daemons.

root.sh completed successfully on node1!

node2:

[root@node2 ~]# /u01/crs_1/root.sh

WARNING: directory '/u01' is not owned by root

Checking to see if Oracle CRS stack is already configured

/etc/oracle does not exist. Creating it now.

Setting the permissions on OCR backup directory

Setting up NS directories

Oracle Cluster Registry configuration upgraded successfully

WARNING: directory '/u01' is not owned by root

clscfg: EXISTING configuration version 3 detected.

clscfg: version 3 is 10G Release 2.

assigning default hostname node1 for node 1.

assigning default hostname node2 for node 2.

Successfully accumulated necessary OCR keys.

Using ports: CSS=49895 CRS=49896 EVMC=49898 and EVMR=49897.

node <nodenumber>: <nodename> <private interconnect name> <hostname>

node 1: node1 node1-priv node1

node 2: node2 node2-priv node2

clscfg: Arguments check out successfully.

NO KEYS WERE WRITTEN. Supply -force parameter to override.

-force is destructive and will destroy any previous cluster

configuration.

Oracle Cluster Registry for cluster has already been initialized

Startup will be queued to init within 90 seconds.

Adding daemons to inittab

Expecting the CRS daemons to be up within 600 seconds.

CSS is active on these nodes.

node1

node2

CSS is active on all nodes.

Waiting for the Oracle CRSD and EVMD to start

Waiting for the Oracle CRSD and EVMD to start

Oracle CRS stack installed and running under init(1M)

Running vipca(silent) for configuring nodeapps

/u01/crs_1/jdk/jre//bin/java: error while loading shared libraries: libpthread.so.0: cannot open shared object file: No such file or directory

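The error above is caused by the LD_ASSUME_KERNEL=2.4.19 workaround (for bug 3937317) in the vipca and srvctl scripts: the glibc in RHEL5 no longer provides the old LinuxThreads libraries that this setting selects, so libpthread.so.0 cannot be loaded. Fix: unset the variable right after it is exported, in both scripts (in /u01/crs_1/bin):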

[root@node2 bin]# vi vipca

Linux) LD_LIBRARY_PATH=$ORACLE_HOME/lib:$ORACLE_HOME/srvm/lib:$LD_LIBRARY_PATH

export LD_LIBRARY_PATH

#Remove this workaround when the bug 3937317 is fixed

arch=`uname -m`

if [ "$arch" = "i686" -o "$arch" = "ia64" ]

then

LD_ASSUME_KERNEL=2.4.19

export LD_ASSUME_KERNEL

fi

unset LD_ASSUME_KERNEL    # add this line

#End workaround

[root@node2 bin]# vi srvctl

LD_ASSUME_KERNEL=2.4.19

export LD_ASSUME_KERNEL

unset LD_ASSUME_KERNEL    # add this line
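If the fix has to be applied more than once, the edit can be scripted. A minimal sketch (the function `patch_ld_assume_kernel` is hypothetical) that adds `unset LD_ASSUME_KERNEL` directly after every `export LD_ASSUME_KERNEL` line, keeps a `.orig` backup, and skips files that already contain the `unset`. In vipca this puts the line inside the `if ... fi` block rather than after `fi` as shown above; either placement undoes the value the script itself sets. It needs GNU sed for the one-line `a` command.

```shell
# Hypothetical helper: add "unset LD_ASSUME_KERNEL" after every
# "export LD_ASSUME_KERNEL" line of a CRS script such as vipca or srvctl.
# Requires GNU sed (one-line "a text" form). Keeps a .orig backup.
patch_ld_assume_kernel() {
    local script="$1"
    if grep -q '^[[:space:]]*unset LD_ASSUME_KERNEL' "$script"; then
        echo "already patched: $script"
        return 0
    fi
    cp -p "$script" "$script.orig"
    sed -i '/^[[:space:]]*export LD_ASSUME_KERNEL[[:space:]]*$/a unset LD_ASSUME_KERNEL' "$script"
    echo "patched: $script"
}

# Usage (paths as in this article):
# for f in /u01/crs_1/bin/vipca /u01/crs_1/bin/srvctl; do patch_ld_assume_kernel "$f"; done
```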

Re-run root.sh on node2:

Note: root.sh can only be run once. To run it again, first run rootdelete.sh (in /u01/crs_1/install).

[root@node2 bin]# /u01/crs_1/root.sh

WARNING: directory '/u01' is not owned by root

Checking to see if Oracle CRS stack is already configured

Oracle CRS stack is already configured and will be running under init(1M)

[root@node2 bin]# cd ../install

[root@node2 install]# ls

cluster.ini install.incl rootaddnode.sbs rootdelete.sh templocal

cmdllroot.sh make.log rootconfig rootinstall

envVars.properties paramfile.crs rootdeinstall.sh rootlocaladd

install.excl preupdate.sh rootdeletenode.sh rootupgrade

[root@node2 install]# ./rootdelete.sh

CRS-0210: Could not find resource 'ora.node2.LISTENER_NODE2.lsnr'.

CRS-0210: Could not find resource 'ora.node2.ons'.

CRS-0210: Could not find resource 'ora.node2.vip'.

CRS-0210: Could not find resource 'ora.node2.gsd'.

Shutting down Oracle Cluster Ready Services (CRS):

Stopping resources.

Successfully stopped CRS resources

Stopping CSSD.

Shutting down CSS daemon.

Shutdown request successfully issued.

Shutdown has begun. The daemons should exit soon.

Checking to see if Oracle CRS stack is down...

Oracle CRS stack is not running.

Oracle CRS stack is down now.

Removing script for Oracle Cluster Ready services

Updating ocr file for downgrade

Cleaning up SCR settings in '/etc/oracle/scls_scr'

[root@node2 install]#

Running root.sh again on node2 produces another error:

[root@node2 install]# /u01/crs_1/root.sh

WARNING: directory '/u01' is not owned by root

Checking to see if Oracle CRS stack is already configured

Setting the permissions on OCR backup directory

Setting up NS directories

Oracle Cluster Registry configuration upgraded successfully

WARNING: directory '/u01' is not owned by root

clscfg: EXISTING configuration version 3 detected.

clscfg: version 3 is 10G Release 2.

assigning default hostname node1 for node 1.

assigning default hostname node2 for node 2.

Successfully accumulated necessary OCR keys.

Using ports: CSS=49895 CRS=49896 EVMC=49898 and EVMR=49897.

node <nodenumber>: <nodename> <private interconnect name> <hostname>

node 1: node1 node1-priv node1

node 2: node2 node2-priv node2

clscfg: Arguments check out successfully.

NO KEYS WERE WRITTEN. Supply -force parameter to override.

-force is destructive and will destroy any previous cluster

configuration.

Oracle Cluster Registry for cluster has already been initialized

Startup will be queued to init within 90 seconds.

Adding daemons to inittab

Expecting the CRS daemons to be up within 600 seconds.

CSS is active on these nodes.

node1

node2

CSS is active on all nodes.

Waiting for the Oracle CRSD and EVMD to start

Oracle CRS stack installed and running under init(1M)

Running vipca(silent) for configuring nodeapps

Error 0(Native: listNetInterfaces:[3])

[Error 0(Native: listNetInterfaces:[3])]

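Fix: no network interfaces are registered for the cluster yet (the first oifcfg getif below prints nothing). Register the public and interconnect interfaces with oifcfg (in /u01/crs_1/bin):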

[root@node2 bin]# ./oifcfg iflist

eth0 192.168.8.0

eth1 10.10.10.0

[root@node2 bin]# ./oifcfg getif

[root@node2 bin]# ./oifcfg setif -global eth0/192.168.8.0:public

[root@node2 bin]# ./oifcfg setif -global eth1/10.10.10.0:cluster_interconnect

[root@node2 bin]# ./oifcfg getif

eth0 192.168.8.0 global public

eth1 10.10.10.0 global cluster_interconnect
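The two `setif` calls can be derived from the `oifcfg iflist` output. A dry-run sketch with a hypothetical function `make_setif_cmds`: it reads the iflist output on stdin and only prints the commands for the interfaces you name as public and interconnect (eth0 and eth1 in this setup), so you can review them before running them.

```shell
# Hypothetical dry-run helper: turn "oifcfg iflist" output (read from
# stdin, one "<interface> <subnet>" pair per line) into the two
# "oifcfg setif -global" commands. It only prints them; nothing is run.
# $1 = public interface name, $2 = private interconnect interface name.
make_setif_cmds() {
    local pub_if="$1" priv_if="$2" ifname subnet
    while read -r ifname subnet; do
        case "$ifname" in
            "$pub_if")  echo "./oifcfg setif -global $ifname/$subnet:public" ;;
            "$priv_if") echo "./oifcfg setif -global $ifname/$subnet:cluster_interconnect" ;;
        esac
    done
}

# Usage, from /u01/crs_1/bin:
# ./oifcfg iflist | make_setif_cmds eth0 eth1
```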

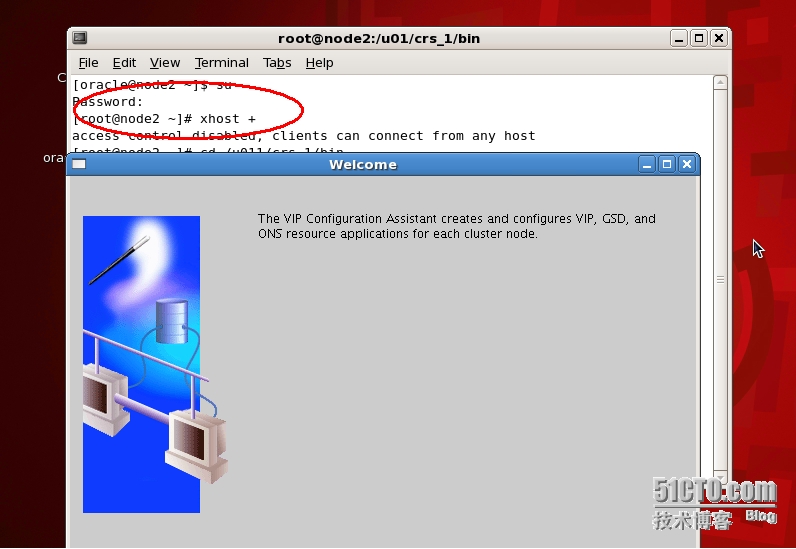

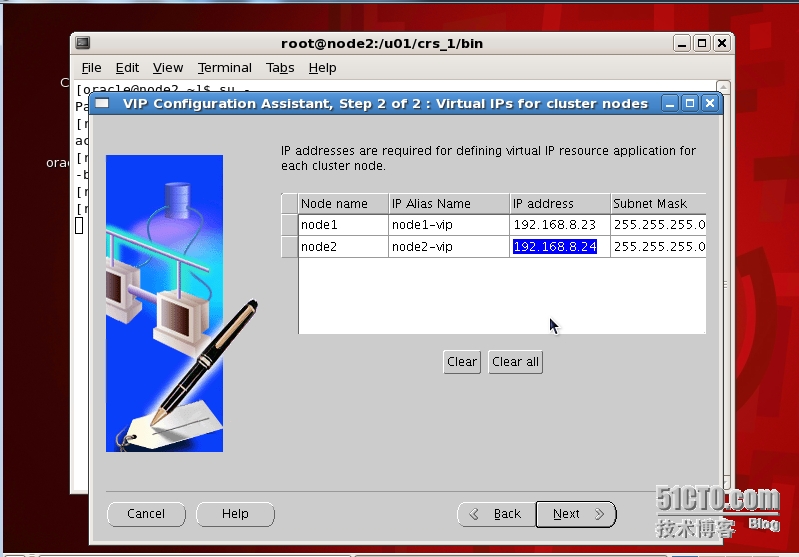

Then run vipca on node2:

Run vipca as root (it is in /u01/crs_1/bin).

The values entered in vipca (VIP names and IP addresses) must match the /etc/hosts file.
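A small check you could run before starting vipca, to confirm that every name you are about to enter has an entry in the hosts file. A sketch with a hypothetical function `check_hosts_entries`; the VIP names `node1-vip` and `node2-vip` in the usage line are assumptions, since the vipca screens in this article are only shown as images. Commented-out entries are ignored.

```shell
# Hypothetical pre-check for vipca: print "<name> <address>" for every
# name found in the hosts file; report missing names on stderr and
# return 1 if any name is missing. Text after "#" is ignored.
check_hosts_entries() {
    local hosts_file="$1"; shift
    local name addr rc=0
    for name in "$@"; do
        addr=$(awk -v n="$name" '
            {
                c = index($0, "#")          # strip comments
                if (c > 0) $0 = substr($0, 1, c - 1)
                for (i = 2; i <= NF; i++)
                    if ($i == n) { print $1; exit }
            }
        ' "$hosts_file")
        if [ -n "$addr" ]; then
            echo "$name $addr"
        else
            echo "MISSING: $name" >&2
            rc=1
        fi
    done
    return $rc
}

# Usage (VIP names are hypothetical):
# check_hosts_entries /etc/hosts node1 node2 node1-vip node2-vip
```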

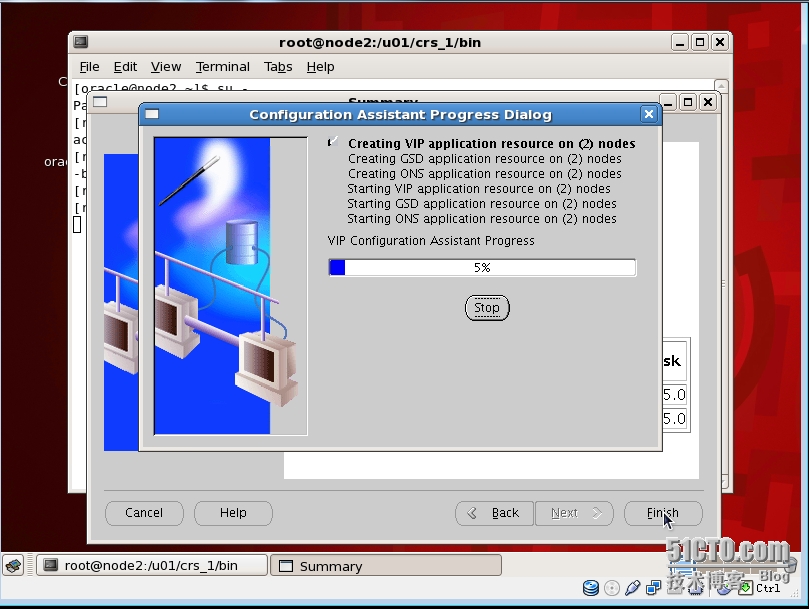

Start the configuration.

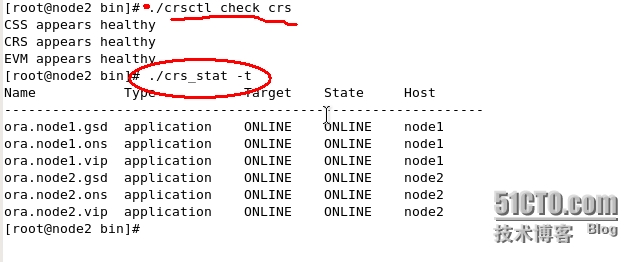

After vipca completes successfully, the CRS services run normally.

Installation complete!

Verify CRS:

[root@node2 bin]# crs_stat -t

Name Type Target State Host

------------------------------------------------------------

ora.node1.gsd application ONLINE ONLINE node1

ora.node1.ons application ONLINE ONLINE node1

ora.node1.vip application ONLINE ONLINE node1

ora.node2.gsd application ONLINE ONLINE node2

ora.node2.ons application ONLINE ONLINE node2

ora.node2.vip application ONLINE ONLINE node2

[root@node1 ~]# crs_stat -t

Name Type Target State Host

------------------------------------------------------------

ora.node1.gsd application ONLINE ONLINE node1

ora.node1.ons application ONLINE ONLINE node1

ora.node1.vip application ONLINE ONLINE node1

ora.node2.gsd application ONLINE ONLINE node2

ora.node2.ons application ONLINE ONLINE node2

ora.node2.vip application ONLINE ONLINE node2
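Instead of reading the table by eye, the `crs_stat -t` output can be checked with a small filter. A minimal sketch (the function `check_crs_online` is hypothetical): it skips the header and dashed lines, prints every resource whose Target or State is not ONLINE, and returns non-zero if any such resource exists or no resources were listed. A resource deliberately left OFFLINE would also be reported.

```shell
# Hypothetical post-install check: read "crs_stat -t" output on stdin,
# print resources whose Target or State is not ONLINE, and exit 0 only
# if at least one resource was seen and all of them are ONLINE.
check_crs_online() {
    awk '
        $1 == "Name" || $1 ~ /^-+$/ { next }    # header and separator
        NF >= 4 {
            seen++
            if ($3 != "ONLINE" || $4 != "ONLINE") {
                print "NOT ONLINE: " $0
                bad++
            }
        }
        END { exit (seen == 0 || bad > 0) }
    '
}

# Usage:
# crs_stat -t | check_crs_online && echo "all CRS resources ONLINE"
```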

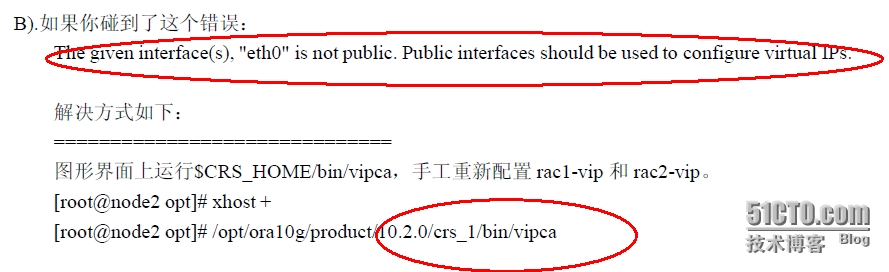

Appendix: error case

If the following error appears when running root.sh:

Resolve it by running vipca (as root) on the node where the error occurred.

At this point the CRS installation is complete!

This article is from the blog “天涯客的blog”. Please keep this source when reposting: http://tiany.blog.51cto.com/513694/1408023
